I see a lot of people trying to make sense of the roles they occupy in the cyber defence world. Asking yourself these questions is a good thing to do, primarily because the world's needs advance at a faster pace than our structures can adapt to them. Let's call this phenomenon structural lag ⌛.

One of the many ways in which structural lag manifests is through our persistent use of old lenses to understand new problems, like the complex challenges of security operations nowadays. These lenses were perhaps useful back in the day, but they no longer illuminate the way forward. In some cases they fail to capture the complexities of an issue and obscure key aspects of the wider problem space. One such instance of structural lag is the false Threat Hunting vs Detection Engineering dichotomy.

This saga has a lot of history, mainly fueled by the rise of both functions due to the increasingly complex threat landscape, real needs, and also a sprinkle of industry hype. There are good points made in many of the articles here and glimpses of knowledge that start pointing in the right direction, offering a more data-centric approach that is more conducive to our new reality. Some articles I've read over the years:

| Date | Title | Source |
|---|---|---|
| 02 September 2021 | 4 Differences Between Threat Hunting vs. Threat Detection | Watchguard |
| 26 October 2021 | Threat Hunting vs Threat Detection | ChannelProNetwork |
| 01 December 2021 | Threat Hunting vs Detection Engineering: what's the difference | MSSPAlert |
| 21 February 2023 | Threat Hunting vs. Threat Detecting: Two Approaches to Finding & Mitigating Threats | Splunk |
| 26 February 2023 | The dotted lines between Threat Hunting and Detection Engineering | Alex Teixeira |
| 17 May 2023 | Detection Engineering vs Threat Hunting | Danny's Newsletter |
| 19 May 2023 | Guarding the Gates: The Intricacies of Detection Engineering and Threat Hunting | CyborgSecurity |
| 08 June 2023 | Detection Engineering vs Threat Hunting: Distinguishing the Differences | CyborgSecurity |
| 01 November 2023 | Navigating the crossroads of Threat Hunting & Detection Engineering | Alex Teixeira |

The issue with this contraposition is that it brings the focus to a problem that doesn't help advance the state of the art in cyber defence. Here's what we are missing:

- Forget the "hunt vs. detection vs. intel vs. whatever" paradigm 🚫. Security processes are inherently intertwined. Ask yourself: what problem are you trying to solve? What is your actual goal here? I say we are here to pursue higher systemic robustness, where functions are operational "stages" that contribute to a bigger picture.

- A role doesn't exist in an essentialist manner; it is merely a construct 🚧. Roles like "hunter" or "detection engineer" do not predate the activities your SecOps space performs. A "role" is merely a way to define your focus areas. These areas form clusters of activities that become your standard priorities.

- Oftentimes there is no clear-cut division between roles in security operations ➗. Where does the work of a traditional L3 Security Analyst and an Incident Responder start and end? When is a Detection Engineer behaving as a DevSecOps Engineer and when as a Purple Adversarial Engineer? Is a Responder also a forensic analyst and incident manager?

- When you start with "roles" as your unit of comparison, you miss something important 🤹‍♂️: it's all about the interconnectedness of activities within a broader ecosystem. There are overlaps, and these overlaps constitute pivotal articulation points between functions, which is a good thing.

- It is not merely about roles and functions, it is about understanding what the actual problem space is 🔭. What question are you trying to answer?

- The problem space is clear: threat-driven or threat-informed defence 🚀.

- Threat-driven cyber defence is another way of saying Active Defence.

- Stop thinking roles, start thinking data. Active Defence is about crafting a threat-driven data pipeline that can deploy advanced countermeasures.

Why do we care so much what a "threat hunter" vs a "detection engineer" vs a "security analyst" vs an "incident responder" does? Is a threat hunter or incident responder just a glorified security analyst? Is a detection engineer just a glorified DevSecOps engineer or a supercharged security analyst with backend SIEM knowledge? Is a red teamer just a glorified pentester?

By talking in these terms, we are already missing the point! We have to take a step back and understand that role boundaries should be a byproduct of properly planned functional boundaries. Role boundaries should emerge as part of an orchestrated approach towards threat management with a serious focus on understanding threats.

The collection, processing and deployment of security controls deriving from your approach towards threat management is what dictates the functions that you need to succeed. These functions are meant to address classes of problems and become phases in the flow of your security operations.

Instead of fixed roles like hunting, detection, and intelligence, imagine a security pipeline where data flows seamlessly between stages with functions like acquisition, enrichment, analysis, and implementation.
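To make the idea a bit more tangible, here is a minimal sketch of such a pipeline in Python. Everything in it is an assumption made for illustration: the `ThreatRecord` shape, the stage bodies and the keyword check are hypothetical placeholders, not a reference implementation. The only point is that each stage is a function that receives data from the previous stage and hands it to the next.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class ThreatRecord:
    """Hypothetical unit of threat data flowing through the pipeline."""
    source: str
    content: str
    context: Dict[str, object] = field(default_factory=dict)
    history: List[str] = field(default_factory=list)


# A stage is any function that takes a record and returns a record.
Stage = Callable[[ThreatRecord], ThreatRecord]


def acquisition(record: ThreatRecord) -> ThreatRecord:
    # Placeholder: in practice this stage would collect reports, logs, intel feeds.
    record.history.append("acquisition")
    return record


def enrichment(record: ThreatRecord) -> ThreatRecord:
    # Placeholder: attach environment knowledge to the raw data point.
    record.context["word_count"] = len(record.content.split())
    record.history.append("enrichment")
    return record


def analysis(record: ThreatRecord) -> ThreatRecord:
    # Placeholder relevance check; a real one would use your threat model.
    record.context["relevant"] = "ransomware" in record.content.lower()
    record.history.append("analysis")
    return record


def implementation(record: ThreatRecord) -> ThreatRecord:
    # Placeholder: decide which control (if any) gets deployed downstream.
    relevant = record.context.get("relevant", False)
    record.context["action"] = "deploy control" if relevant else "archive"
    record.history.append("implementation")
    return record


PIPELINE: List[Stage] = [acquisition, enrichment, analysis, implementation]


def run_pipeline(record: ThreatRecord, stages: List[Stage] = PIPELINE) -> ThreatRecord:
    """Pass the record through every stage in order."""
    for stage in stages:
        record = stage(record)
    return record


result = run_pipeline(
    ThreatRecord(source="vendor report", content="Ransomware campaign targeting backups")
)
print(result.history, result.context["action"])
```

Notice that nothing in the sketch mentions a "hunter" or a "detection engineer": who runs each stage is decided afterwards, once the flow of data is clear.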

Behind all this is the concept of a threat-driven cyber defence pipeline. This pipeline is a complex engineering effort that consists of phases that help understand and deploy better security controls. This threat-driven pipeline ultimately aims to protect the business from the risks posed by cyber threats to the many value-generating streams that justify our existence. Unless you are a cyber security company, CyberSec is an enabler of value and not a producer of value per se.

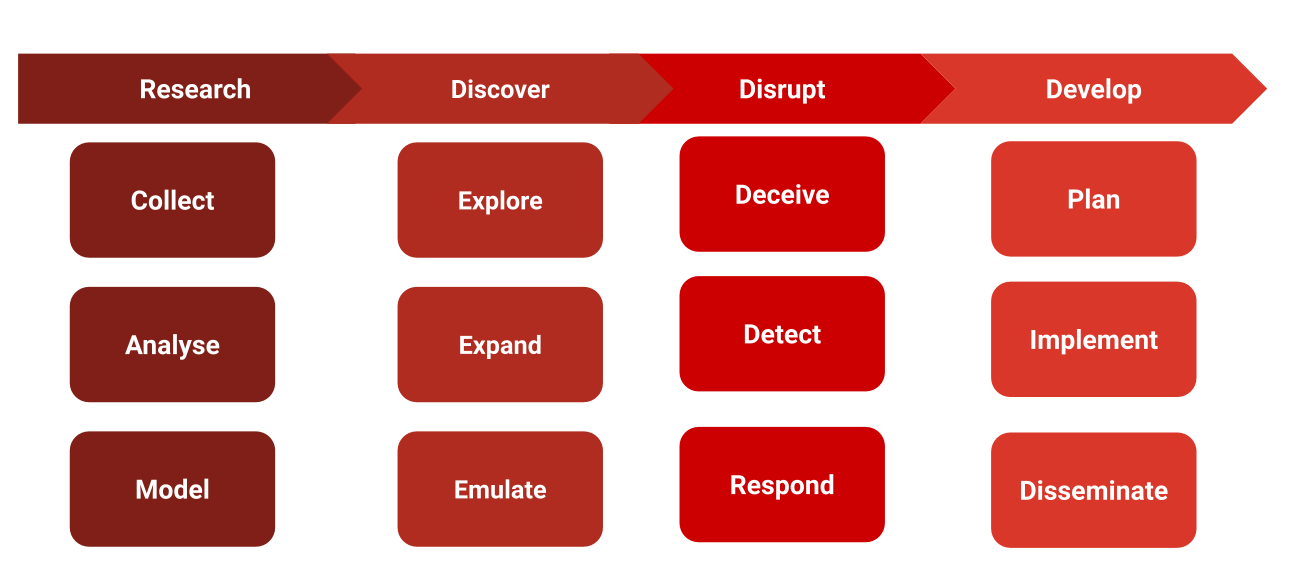

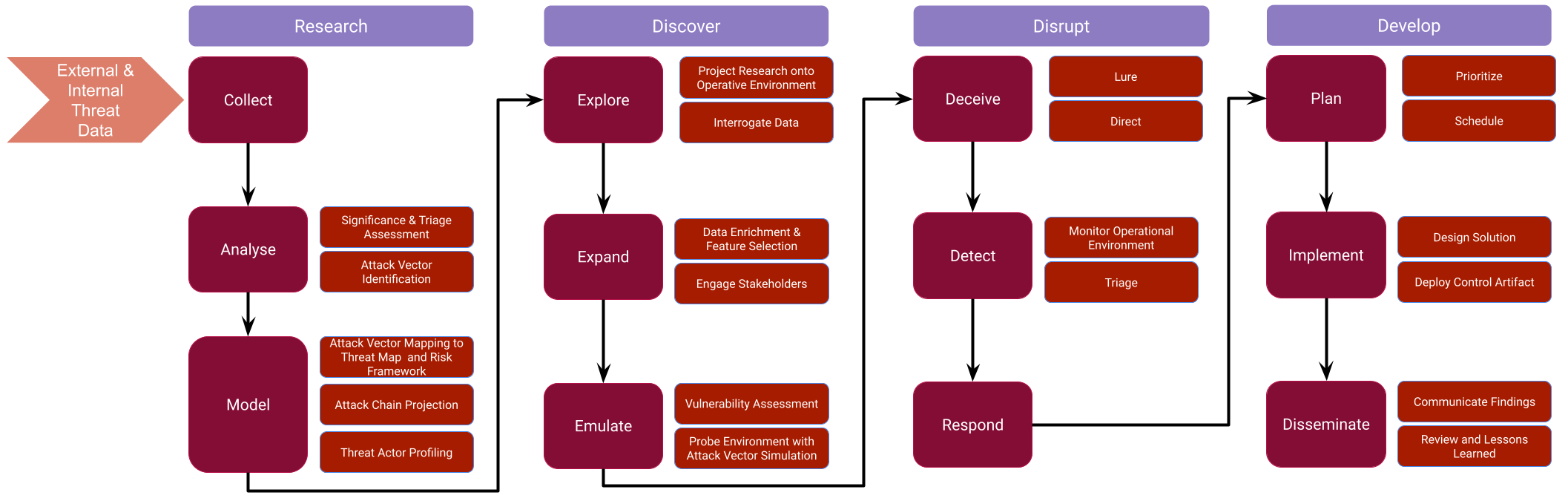

R1D3: Active Defence Pipeline

So what does this Active Defence Pipeline look like? At a very high level, it comprises four basic activities all cyber defence operations perform, knowingly or not: Research, Discovery, Disruption and Development. This is why I call it R1D3 (pronounced simply "RIDE" or if you are a Star Wars fan just say R1D3 as if you would call R2-D2 ⚛️).

Let's unpack this a little.

Research

The process by which you seek to understand the threats that may impact your business. This is achieved by actively collecting, triaging and synthesizing data about your internal and external threat landscape. The research phase is also where you assess the significance of threat events to your industry vertical and, more importantly, where you model these threats in terms of discrete data points called threat maps (you know many threat map models already: MITRE ATT&CK, MITRE D3FEND, MITRE Attack Flow, etc.). These threat maps adopt structured formats for sharing your research downstream.

Discover

The process by which you go beyond the analysis and start actively exploring your internal infrastructure and external perimeter to understand your exposure, readiness, control gaps and any instances of compromise. This is the phase where you project your initial research onto your real operational environment. This means your original data points will be transformed, because your operational environment has its own texture and nuances that abstract research doesn't expose. Original data points will go through a process of implicit or explicit feature selection and dimensionality reduction.

Discovery involves finding something new, illuminating connections, and forming novel understandings that truly bring your research to the concrete and practical terrain that is your organization.

Disrupt

The process by which you intercept and interrupt adversarial attack patterns. Disruption is about shattering adversaries' memorized patterns, destabilising and disorienting opponents, imposing high operational costs and creating crucial windows of opportunity for you to achieve defensive mission objectives. Disruption usually comprises deception, detection and response, i.e. all the different ways in which you can "engage" the adversary.

Develop

The process by which you ensure findings are communicated, controls are implemented (and yes, a detector artifact is just another security control, just as a decoy or lure is) and your attack surface is ultimately transformed. Transformation here means your ability to integrate learnings into wider structures and processes, informing policies and procedures.

R1D3 Data Flow

The active defence pipeline ultimately leads to a more resilient security posture and better preparedness against evolving threats. We could further develop our pipeline into its constituent parts. I will share a diagram here but will leave the expansion of the topic for the next post:

If you've been following my posts and articles over the last three years, you know I've been preaching a straightforward idea: security operations is a data problem. The reality is that Hunting and Detection Engineering are phases of a bigger threat-driven strategy that has different operational aspects. Roles are merely constructs that represent units of work within functional boundaries. They have meaning within the context of your organization and sometimes the wider industry. However, roles and teams should NOT be the boundary lines where you start developing your strategy from. If you are doing this, you are already starting on the wrong foot! Start with data, start with intel, and start with defining your operational objectives.

The Active Defence Pipeline should be the start of your SecOps strategy. Based on this, you can start to trace the phases of your operations, then the functions and finally the roles. By proceeding this way, roles emerge as a logical outcome of your strategy, instead of the other way around.

I will expand on this pipeline in a future article because well... even I get tired of reading my own ramblings!

The Several Misconceptions about Threat Hunting

Many of the misconceptions about threat hunting arise from looking at it with a "detection" lens. This frames the conversation under wrong assumptions. Let's explore some of these assumptions.

Hunt is underdeveloped Detection 🚧

Hunting is not "underdeveloped detection" or lazy, fuzzy query logic. That is just the classic view that hasn't evolved from old times, and it applies a basic detection lens to the problem. If we consider the functions of the NIST Cybersecurity Framework (Identify, Protect, Detect, Respond, Recover, plus Govern in version 2.0), Hunt doesn't simply sit under detective controls: it can improve protective controls, it certainly has a role to play during response, and it greatly helps identify risks and threats. The reason is simple: "hunting" for threats is nothing more than implementing a structured approach to threat-driven cyber defence, which for the most part is heavily based on threat intel.

Hunt is about "finding evil" 😈

As I have written extensively for the last two years, and as captured in aimod2, hunt's findings can be of multiple types:

- visibility gaps: logging or coverage deficiencies

- security control issues: lack of or weaknesses in protective, detective or mitigative controls

- detection opportunities: potential downstream artifacts consumable by detection engineering efforts

- suspicious security events: the stuff that leads to security incidents and your SOC or IR team getting involved

- intelligence events of interest: because hunt research can lead to enriching threat knowledge and threat actor profiling within your organization

- deception opportunities: points in the attack chain that are favourable to the deployment of lures and decoys in order to build deceptive narratives to elicit and direct threat actors down controlled attack paths

All these findings constitute "risks" that need to be categorized according to your organization's risk framework.
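The finding types above can be sketched as a small data model. This is only an illustration under stated assumptions: the `Finding` fields, the free-text `risk` rating and the sample findings are hypothetical, and your own risk framework decides how ratings are actually assigned.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum
from typing import List


class FindingType(Enum):
    """The six hunt finding types listed above."""
    VISIBILITY_GAP = "visibility gap"
    SECURITY_CONTROL_ISSUE = "security control issue"
    DETECTION_OPPORTUNITY = "detection opportunity"
    SUSPICIOUS_SECURITY_EVENT = "suspicious security event"
    INTELLIGENCE_EVENT_OF_INTEREST = "intelligence event of interest"
    DECEPTION_OPPORTUNITY = "deception opportunity"


@dataclass(frozen=True)
class Finding:
    kind: FindingType
    summary: str
    risk: str  # hypothetical rating taken from your organization's risk framework


def summarize(findings: List[Finding]) -> Counter:
    """Count findings per type for a single hunt mission."""
    return Counter(f.kind for f in findings)


# Hypothetical output of one hunt mission: no "evil" found, yet three findings.
mission_findings = [
    Finding(FindingType.VISIBILITY_GAP, "No audit logs for service principals", "medium"),
    Finding(FindingType.DETECTION_OPPORTUNITY, "Rare role assignment pattern", "low"),
    Finding(FindingType.DECEPTION_OPPORTUNITY, "Decoy app registration candidate", "low"),
]

summary = summarize(mission_findings)
evil_only = [f for f in mission_findings if f.kind is FindingType.SUSPICIOUS_SECURITY_EVENT]

for kind, count in summary.items():
    print(f"{kind.value}: {count}")
print(f"suspicious security events: {len(evil_only)}")
```

A mission that only tracked `SUSPICIOUS_SECURITY_EVENT` would report nothing here, even though three actionable risks were surfaced.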

It is extremely rare that a hunt mission will yield NONE of the findings listed above. And certainly, Hunt is not merely finding "hands-on-keyboard" malicious activities.

Hunting is about having random ideas and hypotheses 🎲

Threat Hunting is not just an analyst saying "I have an idea". It is about intel and an intel-driven pipeline with meticulous research and discovery.

When I say intel I mean an operative function that doesn't limit its focus to external threat actors and information provided by third parties about what's going on "in the wild", but a function that also understands that your environment is constantly radiating information that is raw data begging to be refined into insightful intel. And I'm not simply talking about your security incidents:

- Penetration Tests

- Red Team engagements

- Crown Jewel assessments

- Security Incidents

- Insider Threat information

- Cyber Deception decoys, lures and breadcrumbs

- Failed attempts and blocked attacks on your perimeter

Threat Hunting should focus on implementing efficient research pipelines that bring insight from intel teams and extend, refine and enrich their work with operative environment knowledge.

Threat Hunting is about Blue Team "stuff" only ☄️

Who said hunting is merely the art of "crafting SIEM queries" or "finding evil and raising incidents"? Hunt is after a full-circle approach where adversarial engineering plays an important role. If you are not working with, or in the capacity of a purple team and you are not performing attack vector discovery (adversarial emulation and/or atomic testing), then you are only getting one side of the picture.

- Say you receive an external threat report that says an attacker was able to register an App in your Microsoft Entra ID tenant, an App that had the AppRoleAssignment.ReadWrite.All Graph permission. The threat actor was able to use it as a backdoor to assign any roles to attacker-controlled users. You don't know whether you could have been impacted by the same or similar attack chain.

- So you searched your SIEM logs and Entra ID Audit Logs and couldn't find any hits? Is that all your hunt mission is about?

- How about partnering with your Cloud Engineering Team/BU/SME to test this attack concept?

- How about running the required PowerShell tools to understand your Azure tenant and your attack surface?

- How about leveraging your pentest/purple/red team or the hunt team itself to run AzureHound and understand plausible attack paths?

I hope you see where I'm going...

Detection is Automated, Hunt is not 😕

Detection Engineering has a heavy DevOps component to help automate the testing and deployment of detectors to test / prod environments. This is true of Detection; however, a well-developed Hunt project also requires automation at some point of the workflow.

The main thing to automate in Threat Hunting is your research phase. You want to be able to produce semi-automated hunt packages:

- Use an LLM to read and tag threat reports with MITRE TTPs.

- Use extracted data points like TTPs to search libraries of available signatures that can help kick off the hunt (SIGMA, KQL, YARA, SNORT, etc.). These are just starters for your hunt mission.

- Use extracted data points to sweep GitHub in search of repositories that contain offensive or defensive tradecraft that relates to your hunt topic.

- Leverage automation to sweep your Detection repository to identify existing coverage.

- Use semantic searches to pre-search SIEM schemas in an attempt to identify the data collections or indexes that contain data that is meaningful for your hunt project.

- Use all of the above information and the power of LLMs to search for and build atomic red team tests to understand the adversarial aspect of your hunt.

- Fetch and process your Threat Intel Platform data to surface pre-existing campaigns, threat actors or information that relates to your current mission.

By the time the threat hunter receives the information, they already have a hunt package that has been enriched with automation and allows for creative and more nuanced approaches.
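As a rough illustration of two of the automation steps above (tagging a report with techniques and sweeping your detection repository for existing coverage), here is a minimal sketch. It makes several assumptions: a plain regular expression stands in for the LLM tagging step, so it only catches technique IDs that are written out explicitly; the report text is invented; and the rules are hypothetical dictionaries carrying Sigma-style `attack.tNNNN` tags rather than files loaded from a real repository.

```python
import re
from typing import Dict, List

# ATT&CK technique IDs look like T1234 or T1234.001 (sub-technique).
TECHNIQUE_ID = re.compile(r"\bT\d{4}(?:\.\d{3})?\b")


def extract_techniques(report_text: str) -> List[str]:
    """Return the unique technique IDs explicitly mentioned in a report."""
    return sorted(set(TECHNIQUE_ID.findall(report_text)))


def existing_coverage(techniques: List[str], rules: List[dict]) -> Dict[str, List[str]]:
    """Map each technique to the titles of rules already tagged with it."""
    coverage: Dict[str, List[str]] = {t: [] for t in techniques}
    for rule in rules:
        # Normalize Sigma-style tags: "attack.t1098.003" -> "T1098.003".
        tagged = {tag.removeprefix("attack.").upper() for tag in rule["tags"]}
        for technique in techniques:
            if technique in tagged:
                coverage[technique].append(rule["title"])
    return coverage


# Invented report excerpt and hypothetical detection repository content.
report = (
    "The actor granted additional cloud roles (T1098.003) to an application "
    "and later reused valid cloud accounts (T1078.004). T1098.003 was seen twice."
)
detection_repo = [
    {"title": "App role assignment by new service principal",
     "tags": ["attack.persistence", "attack.t1098.003"]},
    {"title": "Suspicious PowerShell download", "tags": ["attack.t1059.001"]},
]

techniques = extract_techniques(report)
hunt_package = {
    "techniques": techniques,
    "coverage": existing_coverage(techniques, detection_repo),
}
# Techniques with no matching rule are candidate starting points for the hunt.
hunt_package["uncovered"] = [t for t, hits in hunt_package["coverage"].items() if not hits]
print(hunt_package)
```

The resulting dictionary is the kind of pre-enriched package a hunter could start from. `str.removeprefix` needs Python 3.9 or later.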

A Detection is an automated Hunt 😦🤯

When you create a new detector as a result of a hunt mission, you are not crystallizing a hunt; you are simply capturing one of many outcomes of a hunt research project into an artifact meant for detection.

Hunt is not a process that can be "automated" end-to-end. It relies heavily on creative and lateral thinking, situational analysis, operative environment knowledge and deep system/forensics/network knowledge.

Perhaps one day, when Quantum Computing merges with ML and we start seeing AGI, hunt, detection, response and everything else will be automated and we can sit and watch how offensive and defensive AGIs fight each other! 😹

Conclusion

Perhaps you are now more confused than when you started reading! If you ask me, that's a good thing. Sometimes the only way to get out of rigid constructs that limit our way of looking at a problem is by redefining our coordinates.

In case some things are not clear, here's a summary of the points I've made:

- ❌ Ditch "Hunt vs. Detect vs. Intel vs. Whatever" as a way to think about your SecOps: Security functions are stages in a bigger threat-driven system, not competing roles.

- ✔️ Focus on Problems, Not Labels: What problems are you solving? Start with threat-driven defence, since this is your goal. Don't start by asking people to define their job family and position description.

- ✔️ Roles are functional boundaries, not rigid and clear-cut categories: "Hunter" or "Analyst" labels define focus areas, not inherent identities.

- ✔️ Think beyond Roles, think Data, Knowledge and Insight: The key is crafting a powerful, data-driven threat defence system. We want to build data-driven pipelines to actively counter threats, and you won't get there by merely contrasting "job descriptions".

- ✔️ Embrace Interconnectedness: Lines between roles like analyst, engineer and responder are fuzzy, reflecting real-world overlap. Functions may overlap and feed into each other, creating crucial collaboration points.